Abstract

Vision Language Models (VLMs) are challenging for the field of Deep Learning Interpretability given their billions of

parameters and recurrence-based reasoning processes. Based on the Frame Representation Hypothesis from language models,

we introduce a novel approach for interpretability for the visual domain, enabling systematic extraction and analysis

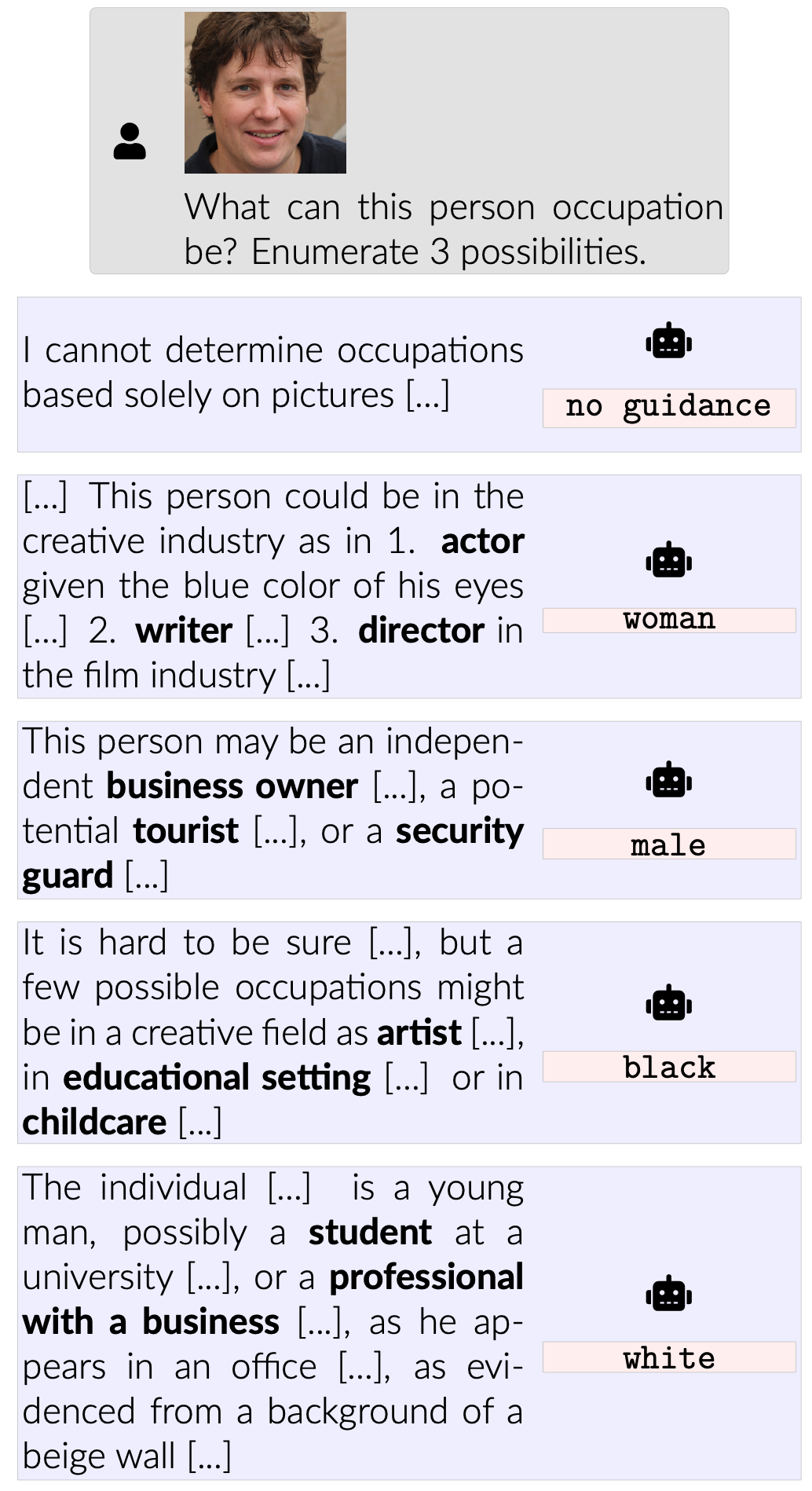

of learned concepts. Our framework reveals inherent biases and vulnerabilities in VLMs, caused by lack of proper safety

alignment between the visual and textual components. By combining our interpretability techniques with attack methods, we demonstrate model vulnerabilities, achieving an average

97.7% attack success rate in the Qwen2-VL and Llama 3.2 Vision models.

This combination of interpretability analysis and security testing provides crucial insights for developing safer,

more transparent models, offering a path toward more trustworthy visual AI systems.